Hold on to your stethoscopes, because Google just unveiled an AI that could revolutionize medicine as we know it! AMIE, Google’s AI doctor, can now “see” and interpret medical images like X-rays and MRIs, engaging in diagnostic conversations with stunning accuracy. Is this the dawn of a new era in healthcare, or the beginning of the end for human doctors? The diagnosis is in, and it’s mind-blowing.

Google Research has just dropped a bombshell in the world of medical AI with its latest advancements in AMIE (Articulate Medical Intelligence Explorer). This isn’t just another chatbot; AMIE is a sophisticated research AI agent designed for diagnostic dialogue, and now, it has gained the power of sight. According to a recent announcement from Google Research, multimodal AMIE can intelligently request, interpret, and reason about visual medical information like X-rays, CT scans, and MRIs during a diagnostic conversation. This breakthrough, detailed in early May 2025, suggests a future where AI could play a significantly more active and insightful role in diagnosing diseases, potentially surpassing human capabilities in certain areas.

AMIE Can See Inside the Human Body

The previous iteration of AMIE was already impressive, demonstrating the ability to conduct diagnostic conversations with patients (simulated or actor-based) with a level of empathy and accuracy that rivaled human primary care physicians in some studies. However, the inability to directly process and understand medical imaging was a significant limitation. Medical diagnosis often hinges on the interpretation of visual data – the subtle shadow on an X-ray, the anomaly in an MRI, the pattern in a CT scan. Without this capability, AI’s role in diagnostics remained incomplete.

The new multimodal AMIE addresses this gap head-on. As ArtificialIntelligence-News.com reported, Google is giving its diagnostic AI the ability to understand visual medical information. This means AMIE can now look at an X-ray you provide (in a research context), discuss its findings with you, ask clarifying questions, and integrate that visual data into its overall diagnostic reasoning. Imagine an AI that can not only listen to your symptoms but also look at your scans and tell you what it sees, all while explaining its reasoning in a clear, understandable way. This is the promise of multimodal AMIE, and it’s a giant leap towards more comprehensive AI-assisted healthcare.

How Does it Work?

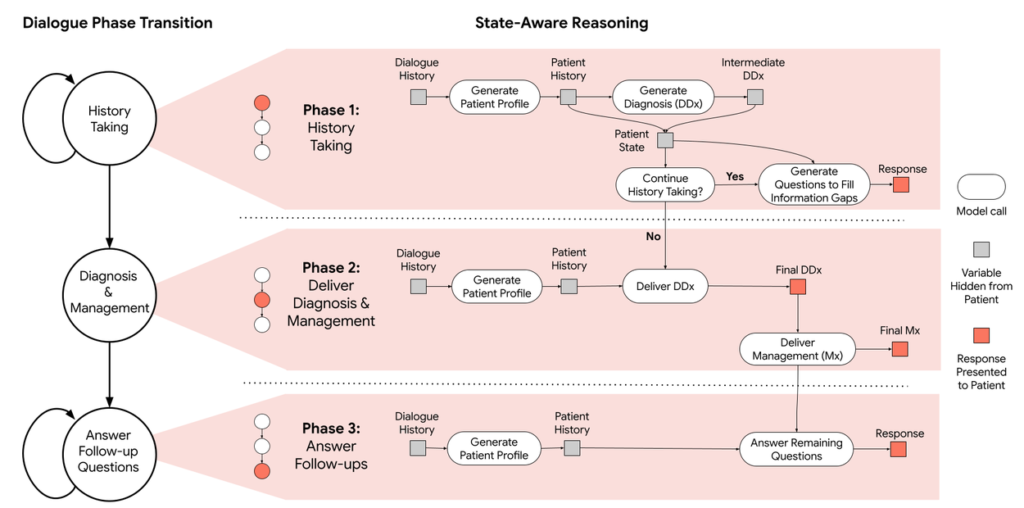

While Google keeps the deepest technical details of its proprietary models close to its chest, the research paper on multimodal AMIE sheds some light on the underlying technology. The system is built upon advanced large language models (LLMs) that have been specially fine-tuned on vast amounts of medical data, including medical textbooks, research papers, and, crucially for this new version, a massive dataset of medical images and associated reports. This allows AMIE to learn the complex patterns and correlations between visual findings, patient symptoms, and potential diagnoses.

The “multimodal” aspect means AMIE can process and integrate information from different types of inputs – text (the conversational dialogue) and images. When presented with a medical image, AMIE doesn’t just “see” pixels; it leverages its training to identify clinically relevant features, compare them against its knowledge base, and reason about their diagnostic significance. It can then articulate these findings and incorporate them into its ongoing conversation with a patient or a clinician. The YouTube channel Two Minute Papers often breaks down such complex AI advancements, and the visual capabilities of AMIE are indeed a hot topic. The goal is not just to identify abnormalities but to do so within the context of a broader diagnostic process, mimicking (and potentially exceeding) the reasoning of an experienced physician.

Is AMIE Outperforming Human Doctors?

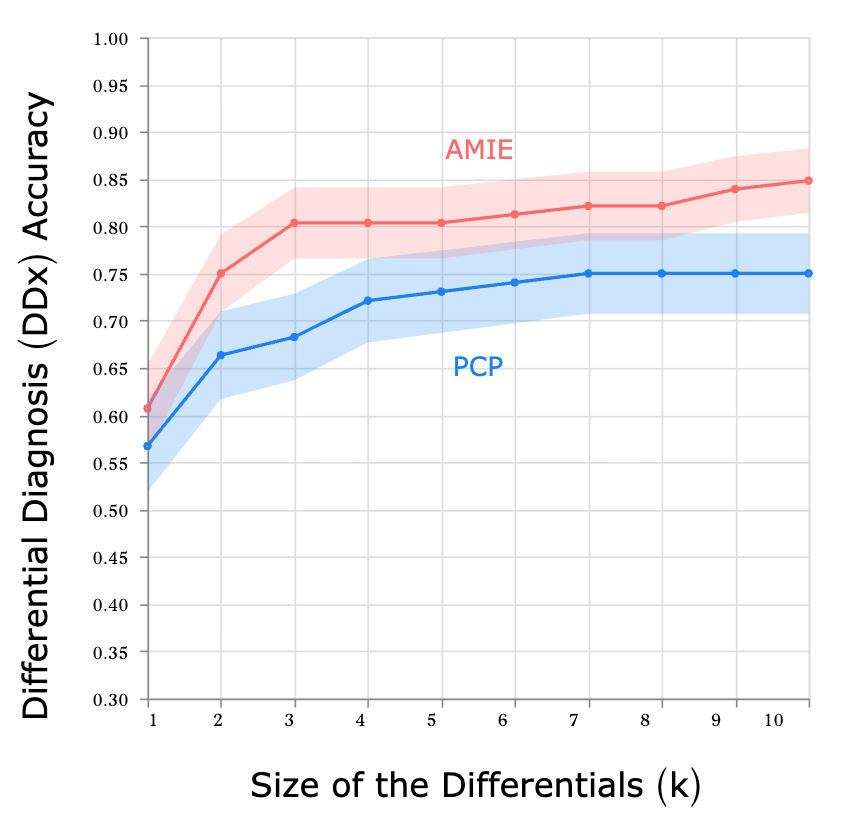

One of the most startling claims emerging from Google’s research concerns AMIE’s diagnostic accuracy. Even before gaining visual capabilities, text-based AMIE was shown in studies to perform on par with, and in some cases better than, board-certified primary care physicians in both diagnostic accuracy and the quality of its conversational interaction. A study published in Nature covers that earlier, text-only version rather than the multimodal system, but its results are consistent with AMIE’s development trajectory and highlight the potential for AI to reach expert-level diagnostic capabilities.

With the addition of multimodal understanding, AMIE’s diagnostic prowess is expected to increase significantly. The ability to analyze medical images directly, rather than relying on textual descriptions of those images, provides a richer source of diagnostic information. While Google is careful to frame AMIE as a research project and an assistive tool for clinicians, the performance metrics inevitably raise questions about the future roles of human doctors, particularly in specialties like radiology where image interpretation is paramount. As Towards Data Science noted in an article about a previous iteration, AMIE’s ability to sift through possibilities without human biases might give it an edge. The question is no longer whether AI can perform complex medical tasks, but how well it can perform them and what that means for the medical profession.

AI as a Partner, Not a Replacement (Yet?)

Google emphasizes that AMIE is intended to augment human clinicians, not replace them. The vision is one of human-AI collaboration, where AI tools like AMIE handle initial information gathering, provide diagnostic suggestions, and summarize complex cases, freeing human doctors to focus on the more nuanced aspects of patient care, such as complex decision-making, empathy, and building patient trust. AMIE could act as an incredibly knowledgeable assistant, always up to date on the latest medical research and capable of tirelessly analyzing data.

Imagine a future where your doctor consults with an AI like AMIE before seeing you. The AI could have already reviewed your medical history, your latest lab results, and any relevant imaging, providing your doctor with a concise summary and a list of potential diagnoses to consider. This could lead to more efficient, more accurate, and more personalized healthcare. The Medical Futurist, Dr. Bertalan Meskó, often discusses such scenarios, highlighting the potential for AI to revolutionize how medical professionals work.

However, the “yet” in “not a replacement (yet?)” looms large. As AI capabilities continue to advance rapidly, the line between assistance and autonomy is likely to blur. If an AI can consistently diagnose more accurately and efficiently than a human in certain contexts, the economic and clinical pressures to rely more heavily on AI will be immense. This raises profound ethical, regulatory, and societal questions that we are only just beginning to grapple with.

Can We Really Trust Dr. AI?

The development of powerful medical AI like AMIE is not without its challenges and ethical considerations. Patient privacy is a paramount concern. AMIE learns from vast amounts of medical data; ensuring this data is anonymized, secure, and used ethically is crucial. Bias is another major issue. If the data used to train AMIE reflects existing biases in healthcare (e.g., racial, gender, or socioeconomic biases), the AI could perpetuate or even amplify these biases in its diagnoses and recommendations.

Then there’s the question of accountability. If an AI makes a diagnostic error, who is responsible? The AI itself? The developers who created it? The doctor who relied on its output? Establishing clear lines of responsibility and liability in AI-assisted healthcare is a complex legal and ethical challenge.

Perhaps most importantly, there’s the issue of patient trust. Will patients be comfortable interacting with an AI doctor, even one that is empathetic and accurate? Will they trust its diagnoses and treatment recommendations? Building public trust in medical AI will require transparency, robust validation, and a clear demonstration of its benefits and safety. TimesOfAI has covered the launch, and public reaction will be key to AMIE’s adoption.

Conclusion

Google’s multimodal AMIE represents a landmark achievement in medical AI. By giving its AI doctor the ability to “see” and interpret medical images, Google has unlocked a new level of diagnostic capability that could transform healthcare. The potential benefits are enormous: faster, more accurate diagnoses, personalized treatment plans, reduced healthcare costs, and improved access to medical expertise, particularly in underserved areas.

However, the rise of Dr. AI also brings profound challenges. We must navigate the ethical minefields of privacy, bias, and accountability. We must redefine the roles of human doctors in an AI-assisted future. And we must build public trust in these powerful new technologies. The journey ahead will be complex, but one thing is clear: the AI doctor is in, and the world of medicine is on the cusp of a revolution. The question is, are we ready for it?