A new video model called Omni has surfaced inside the Gemini app just days before Google I/O 2026 opens on May 19. The leak started as a single UI string spotted on May 2, and escalated on May 11 when actual generated clips leaked from at least one Gemini Pro user’s account, including a spaghetti scene at a seaside restaurant and a professor working through trigonometric proofs on a chalkboard. At the event, Google launched Gemini 3.5, its new flagship family.

The interesting part is what Omni is not. It is not the Omni 1 macOS productivity app Google quietly shipped earlier this year, and the two have no shared lineage despite the unfortunate name collision. Omni inside the Gemini app appears to be either a rebrand of the current Veo 3.1 pipeline, a brand new in-house Gemini video model, or, in the most aggressive read, a true unified omni-model that handles text, image, video, and audio in one architecture.

This article walks through everything that actually leaked: the demo videos, the UI artifacts, the three competing theories about what Omni really is, the early performance reports, the resource cost (one user burned 86% of their daily AI Pro quota generating two clips), and the tier structure rumors. It also covers how Omni would compare to Sora 2, Veo 3.1, and ByteDance Seedance 2 if the early reports hold.

> 🚨 Google appears to be testing a new video model Gemini Omni.
>
> You can:
>
> - Remix videos
> - Edit videos directly in chat
> - Use ready made templates
>
> Looks like nano-banana for video. pic.twitter.com/n7qDmeiJN4
>
> AshutoshShrivastava (@ai_for_success), May 11, 2026

What Actually Leaked

Two separate leaks make up the current picture, and they happened nine days apart.

The first leak was a UI string. On May 2, TestingCatalog spotted text inside the Gemini app’s video generation tab that read “Start with an idea or try a template. Powered by Omni.” The string sat next to “Toucan”, which is Google’s internal codename for the existing Veo 3.1 powered video pathway. That juxtaposition is what triggered the speculation, because it suggests staging for a swap rather than a deprecation.

The second leak was the demo videos. On May 11, at least one Gemini AI Pro subscriber had access to Omni and shared two generated clips. The video tab itself surfaced new copy promising users could “remix your videos, edit directly in chat, try a template, and more.”

That is the entirety of confirmed leak material as of May 12. Everything else is inference from the UI artifacts, comparison to Veo 3.1, and Google’s broader product trajectory.

The UI String Discovery

The “Powered by Omni” string is small but unusually informative. Google rarely surfaces internal model names in production UI, and when it does, it is usually a late stage signal rather than an early one.

A few specifics matter here. First, the placement was inside the consumer Gemini app’s video generation flow, not inside Vertex AI or AI Studio. That means whatever Omni is, Google is preparing to ship it to consumers directly, not just to developers. Second, the string used the public name “Omni” rather than a codename, which differs from how Google has handled previous Veo iterations. Toucan and similar names have stayed internal. Omni was put on a label visible to users.

Third, the staging position next to Toucan implies a transition rather than a parallel offering. If Google planned to keep Veo 3.1 as a separate option, the UI would more likely show two distinct generators. The single-button replacement language (“Powered by Omni”) reads more like a pipeline switch.

The May 11 Demo Videos

Nine days after the UI string leaked, the actual model output started circulating. Two clips have been described in detail by reporters who saw them.

The first clip was a spaghetti scene. The prompt asked for “two men at a table seaside at an upscale restaurant” eating spaghetti, a deliberate reference to the long-running “Will Smith eating spaghetti” benchmark that early video models notoriously failed. Chrome Unboxed described the result as “incredibly realistic”, with convincing physical interaction between the actors and the food, no obvious limb morphing, and proper handling of the spaghetti strands as they were lifted and twirled. This is the kind of physical-object scene that broke Sora 1 in 2024 and that Veo 3 only started handling reliably in late 2025.

The second clip was a math lecture. The prompt requested “a professor writes out a mathematical proof for trigonometric identities on a traditional chalkboard” while explaining the steps. Reporters at 9to5Google noted that the model handled both the on-screen text generation and the physical chalkboard interaction effectively, with the obvious caveat that some artificial qualities remained visible. Generated text inside video is a known weak point for current models, including Veo 3.1, and on-camera writing is a step harder again because the text needs to appear stroke by stroke in sync with hand movement.

Neither clip has been publicly released in full at the time of writing. The descriptions come from reporters who saw the outputs through the leaking subscriber’s account.

Three Theories About What Omni Is

The leak does not confirm the underlying architecture, and there are three plausible interpretations. Each has different implications for what Google announces at I/O.

Theory one. Omni is a public rebrand of Veo 3.1. Under this read, the underlying pipeline does not change, but Google retires the Veo brand from the consumer Gemini app and presents the same model under a unified Gemini-native name. This is the most boring interpretation but also the most operationally plausible, because Google has been visibly trying to consolidate its fragmented AI brand into a single Gemini-led story.

Theory two. Omni is a new Gemini-trained video model. Under this read, Omni is a distinct model trained inside the Gemini family, separate from Veo, and intended to replace or supplement Veo over time. This would explain why the UI strings differentiate Omni from Toucan rather than treating them as the same generator. It would also fit Google’s pattern of running multiple parallel research tracks and consolidating only the winning one.

Theory three. Omni is a true omni-model. Under this read, Omni is a single architecture that handles text, image, video, and audio jointly, comparable to how GPT-4o was positioned in 2024 but extended into long-form video. The name itself is the strongest evidence for this read, and it would match Google’s stated long-term goal of native multimodality. The downside is that no existing public model has demonstrated true joint training across long-form video and the other modalities at the scale of Veo or Sora, and pulling that off in one architecture is genuinely hard.

The reality is probably somewhere between theory two and theory three. The UI signals point to a new model rather than a rebrand, and the multimodal naming points to broader scope than video alone, but a fully unified architecture would be a bigger leap than Google has historically made in a single release.

Editing in Chat and the Feature Hints

The leaked Omni UI copy promises four things: generate, remix, edit directly in chat, and use templates.

The “edit directly in chat” piece is the most interesting. The editing primitives that actually work include removing watermarks from existing clips, swapping objects within a generated scene, and rewriting whole scenes via natural language instructions. None of these are individually new (Runway and Pika have shipped versions of each), but the integration into a single conversational flow alongside generation would be a significant workflow shift, and would put Google ahead of OpenAI on this dimension since Sora 2 still treats generation and editing as separate flows.

The “templates” piece points to a more consumer-facing strategy. Google is clearly aware that most users do not want to write detailed video prompts from scratch, and templates are the same play Canva and CapCut have used to scale generative media to non-technical users. Combined with the YouTube Shorts and Workspace distribution Google already has, templates plus chat editing could create a meaningfully easier video creation flow than what currently exists in Sora’s interface.

The “remix” piece is more speculative based on the leak, but in context it most likely means taking an existing generated clip and varying it (different angle, different mood, different style) without regenerating from scratch. Veo 3.1 already supports something similar via reference images, but the Omni copy implies a more direct interaction.

Early Performance Reports

The early reports from people who have seen Omni output are mixed but lean positive on a few specific dimensions and negative on one.

On the positive side, Omni reportedly improves over Veo 3.1 on prompt adherence (the model does what you actually asked for), camera angle transitions (smoother and more deliberate), scene coherence (objects and characters stay consistent across cuts), and voice generation (cleaner audio, fewer artifacts). The math lecture clip is the cleanest evidence for the prompt adherence claim, since on-camera writing requires the model to understand the prompt at a much finer-grained level than a generic scene generator.

On the negative side, raw fidelity reportedly trails ByteDance Seedance 2, which is currently the strongest pure-fidelity video model available in early 2026. Omni’s edge appears to be on workflow and editing rather than on per-frame photorealism. Sora 2 sits between them on fidelity, behind Seedance but generally ahead of Veo 3.1 on cinematic shots.

The voice generation improvement is worth highlighting separately. Veo 3.1 already supports native audio (synced dialogue, sound effects, ambient sound), but the audio quality has been the weak spot of the otherwise strong Veo 3 release. If Omni meaningfully improves voice generation, that closes one of the most visible gaps with Sora 2.

The Compute Cost Problem

One detail from the leaked usage data deserves its own attention. The Gemini AI Pro subscriber whose account leaked the demo videos consumed 86% of their daily Omni allowance generating just the two prompts.

That is an order of magnitude higher than current Veo 3.1 cost on the same plan. AI Pro currently allows roughly 30 to 50 Veo generations per day depending on length, so a single Veo generation uses about 2% to 3.3% of the daily allowance. Two Omni prompts taking 86% works out to about 43% each, which implies Omni is roughly 13 to 21 times more compute-intensive per generation, or that Google has tightened the daily quotas substantially in preparation for launch, or both.
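The arithmetic behind that multiplier is easy to check. The sketch below is only a back-of-the-envelope calculation, and it assumes that the Omni allowance and the Veo allowance draw on the same daily quota, which the leak does not confirm. The function name and parameters are illustrative and not part of any Google API.

```python
def cost_multiplier(quota_fraction_used: float, clips: int, baseline_per_day: int) -> float:
    """Estimate how many baseline generations one new-model clip costs.

    Assumption (not confirmed by the leak): both models are metered
    against the same daily quota, so fractions are directly comparable.
    """
    per_clip_fraction = quota_fraction_used / clips  # share of quota per Omni clip
    baseline_fraction = 1 / baseline_per_day         # share of quota per Veo clip
    return per_clip_fraction / baseline_fraction


# Reported figures: 86% of the allowance for 2 clips,
# against roughly 30 to 50 Veo generations per day on AI Pro.
low = cost_multiplier(0.86, clips=2, baseline_per_day=30)
high = cost_multiplier(0.86, clips=2, baseline_per_day=50)
print(f"{low:.1f}x to {high:.1f}x")
```

Under those assumptions the estimate lands at about 12.9x to 21.5x, in line with the rough range quoted above. If Google has also tightened quotas, the true per-generation compute gap would be smaller than this figure suggests.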

Chrome Unboxed reports that Google is implementing explicit usage limits for Gemini, likely due to resource-intensive models like Omni. This matters for two reasons. First, it suggests the model is genuinely large and expensive to run, which is consistent with theory two (new Gemini video model) or theory three (true omni-model). Second, it suggests the consumer pricing for video generation is about to change, and the current generosity of the AI Pro plan was a temporary state.

If you are currently using Veo 3.1 inside Gemini for any production work, the practical implication is to budget for a price increase or a tighter rate limit at I/O.

Tier Structure and API Plans

TestingCatalog’s reporting points to a likely two-tier structure mirroring the rest of the Gemini family: a Flash variant for faster, cheaper generations and a Pro variant for the highest quality output. The current leaked clips are described as likely coming from the Flash tier rather than Pro, which is part of why the fidelity comparison to Seedance 2 is unflattering. Pro tier output, if it exists, has not leaked.

The API picture is less clear. The reporting is consistent that Omni will be available through the Gemini API and through Vertex AI for developers, and that it will function as an Agent inside AI Studio similar to how Deep Research currently does. That positioning is interesting because it implies Omni is not just a generation endpoint but a more interactive workflow tool, with longer running stateful sessions rather than single-shot prompt-response calls.

The practical implication for anyone currently building on Veo 3.1 is to avoid locking in long-term production work without budgeting for an API rename or a deprecation timeline. Vertex AI access for Omni will most likely trail the consumer announcement by two to four weeks, which fits Google’s normal pattern of consumer-first rollouts followed by enterprise availability.

How Omni Compares

Based on the leaked details and the reported early performance, here is how Omni would slot in against the current top video models if the early reports hold.

| Model | Strongest dimension | Weakest dimension | Native audio | Editing in chat |
|---|---|---|---|---|
| Gemini Omni (leaked) | Prompt adherence, editing workflow | Raw photorealistic fidelity | Yes, reportedly improved | Yes, native |
| Veo 3.1 | Cinematic camera, audio sync | Generated text in scenes | Yes | Limited |
| Sora 2 | Long form coherence, narrative | Editing flow is separate | Yes | No, separate flow |
| ByteDance Seedance 2 | Per-frame photorealism | Limited Western availability | Limited | No |

The pattern this table makes clear is that Google is not trying to win the photorealism race. Omni’s positioning is workflow and integration, betting that “good enough fidelity plus dramatically easier editing inside the same chat” beats “best in class fidelity in a separate tool.” This is the same play Apple has run with Final Cut against more powerful but harder to use professional editors, and it has historically worked for the larger consumer market.

The other observation is that Omni’s natural distribution is enormous. Google can ship Omni-generated video into YouTube Shorts, Google Workspace, Android, and the Gemini app simultaneously, which is a distribution footprint OpenAI cannot match. Even if Omni launches at parity rather than ahead on raw quality, the distribution advantage is substantial.

Why the Name Collides With Omni 1 macOS

A quick clarification because the naming is genuinely confusing. Earlier this year Google released a small native macOS app called Omni 1, positioned as a lightweight assistant for desktop. That app is unrelated to the Gemini Omni video model that just leaked. Different team, different release track, different product category. The shared name appears to be a coincidence rather than a deliberate brand link, though it is a coincidence that will probably cause user confusion at launch.

If you see “Omni” referenced in the context of video generation, it means the new Gemini video model. If you see “Omni 1” referenced in the context of a macOS app, it means the small desktop assistant from earlier in the year.

What to Expect at Google I/O 2026

Google I/O 2026 runs May 19 and 20, exactly one week from the time of writing. Google also unveiled the Googlebook at its May 12 Android Show, which we cover in our MacBook vs Googlebook vs Chromebook guide. Based on the leak pattern and Google’s recent keynote structure, the most likely Omni-related announcements are:

- Official Omni reveal with demos. A live keynote demo of generation and chat-based editing is almost certain given how prominent video is in Google’s current strategy.

- Tier structure and pricing. Confirmation of the Flash and Pro split, with new pricing for AI Pro and likely a higher tier (possibly Ultra) gated to higher quotas.

- API and Vertex AI timeline. Developer availability is likely staged after the consumer launch by a few weeks.

- Distribution into YouTube and Workspace. Concrete integration announcements, particularly into Shorts creation and Workspace presentations.

- Clarification of Veo’s future. Whether Veo continues as a developer-only brand or is fully absorbed into Omni.

What is unlikely to be announced is true cross-modal architecture details. Google does not normally publish architecture papers at I/O, and if Omni is in fact a unified omni-model, the technical details will probably trickle out via DeepMind blog posts and a paper in the weeks following.

How to Try Frontier AI Models Today

The Omni leak is a useful reminder that the frontier model landscape is moving fast enough that no single subscription gives you access to everything that matters. Veo 3.1 lives inside Gemini. Sora 2 lives inside ChatGPT. Seedance 2 is gated to ByteDance’s tooling. Claude Opus 4.6 lives inside Claude. DeepSeek V4 Pro lives inside DeepSeek’s chat. To use the right model for the right task, most power users end up paying for several subscriptions in parallel.

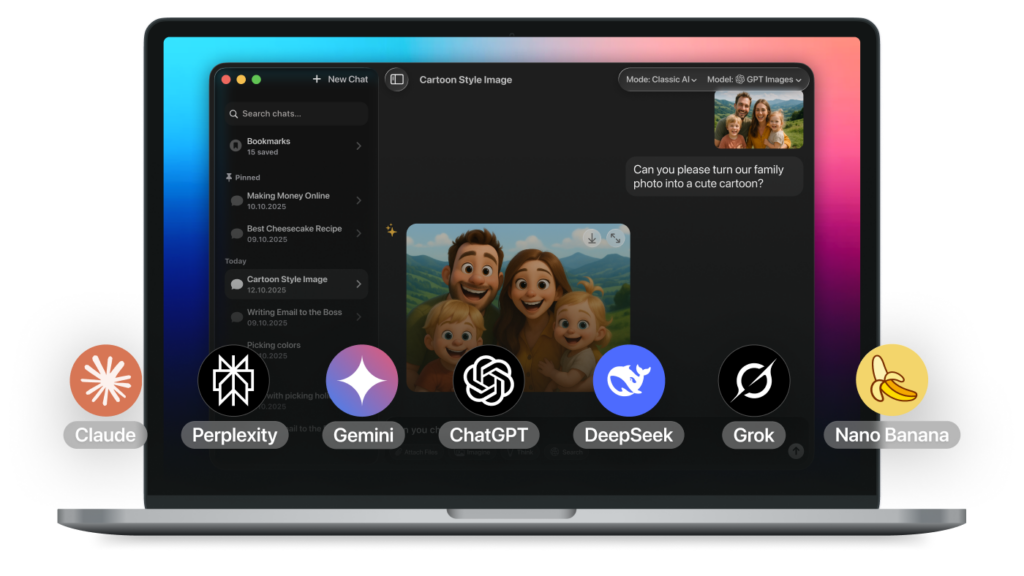

Fello AI takes a different approach. It is a lightweight native app for Mac, iPhone, and iPad that bundles Claude 4.6, GPT-5, Gemini 3.1 Pro, DeepSeek V4 Pro, Llama 4, Perplexity, and the rest of the major models into a single interface. The app is around 30 to 50MB, uses negligible RAM, and runs on any Apple silicon device. Instead of switching between four chat apps and managing four bills, you get every frontier model in one place. When Omni officially launches and the Gemini API gets updated, Fello AI users will get access to it through the same interface they already use.

The Omni leak itself will be officially confirmed (or quietly contradicted) in seven days at Google I/O. We will update this article with the actual specs, tier pricing, and demo links once the keynote ends.