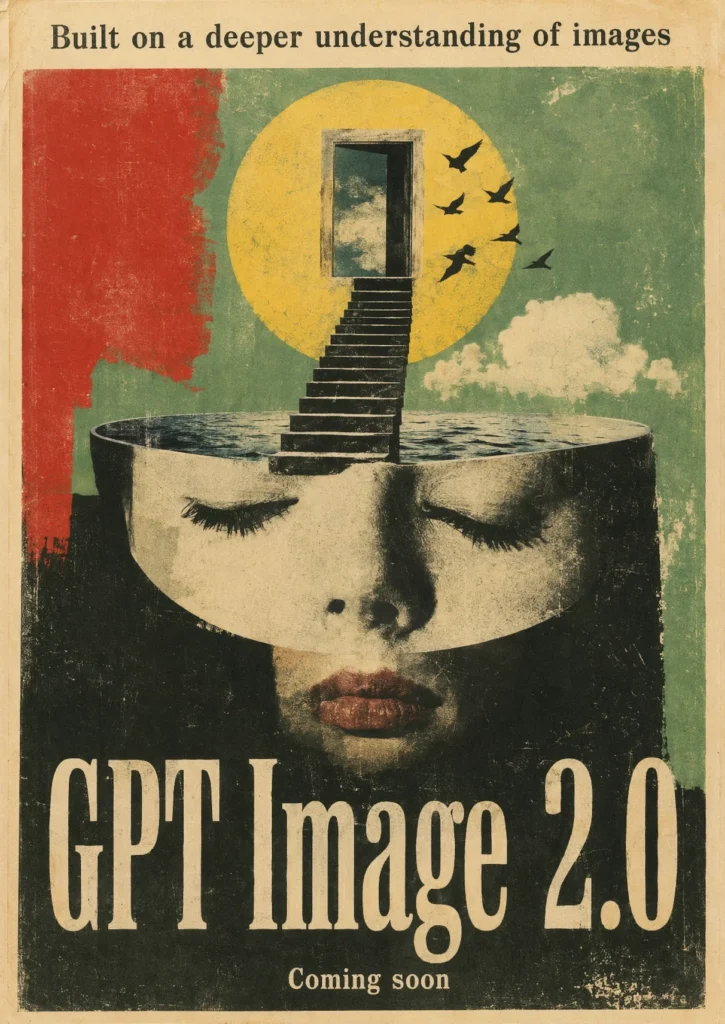

After weeks of leaks and LM Arena sightings, OpenAI shipped ChatGPT Images 2.0 on April 21, 2026. The underlying model, gpt-image-2, is live in ChatGPT, in Codex, and in the API.

This is the first OpenAI image model with built-in reasoning, the first that can render dense text in Japanese, Korean, Chinese, Hindi, and Bengali, and the first that can search the web before drawing a single pixel. It also moves AI image generation past the “inspiration board” phase into something you can actually use as production assets.

What’s Actually New

ChatGPT Images 2.0 replaces the GPT-4o image pipeline that has powered ChatGPT for the past year. The knowledge cutoff is December 2025, so the model correctly renders current brand logos, product designs, geographic maps, and recent cultural references that the older model would hallucinate or get stuck on a 2024-era version of.

OpenAI calls it an interactive creative engine rather than a one-shot generator. That framing matters because reasoning, web search, and image generation now run in a single loop, and the model can refine its output across multiple passes inside the same request rather than forcing you to regenerate from scratch.

Concrete improvements:

- Up to 2K resolution (2048 pixels wide) per output

- Aspect ratios from 3:1 to 1:3, continuous rather than fixed presets

- Up to 8 consistent images from one prompt, with shared characters, lighting, and style

- Functional QR codes that scan with any phone camera

- Web search during generation

- Self-verification before output

- Transparent background exports for direct pipeline integration

- Sharp small text, UI elements, iconography, and dense compositions

The LM Arena codenames “maskingtape” and “gaffertape” turned out to be early test variants. “Duct tape” was the instant variant. “Packingtape” was the thinking-mode build.

Why Text Rendering Was the Hard Problem

For three years, every major image model has treated text as a kind of texture. The model learned that “letters look like this” without learning what specific words mean as glyphs. That’s why GPT-4o, Midjourney V7, and DALL-E 3 all produced posters with garbled “WELCOOMM” text instead of “WELCOME” and why dense layouts (menus, infographics, packaging) collapsed into nonsense.

ChatGPT Images 2.0 handles text as a first-class element. Reviewers tested it against menu cards, conference badges, product packaging, and editorial layouts. The model holds typography, kerning, hierarchy, and spelling. Headlines stay sharp at 2K. Small captions remain legible. SKUs, dates, prices, and labels follow the prompt instead of getting auto-corrected to plausible-looking gibberish.

This is the change that turns AI image generation from “good enough for a mood board” into “good enough for the actual deliverable.”

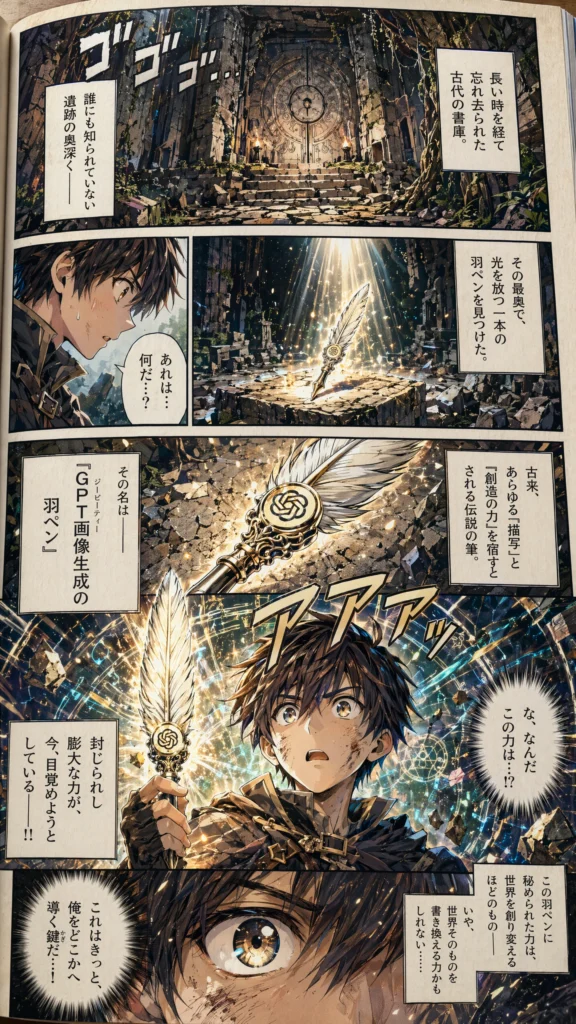

Thinking Mode

ChatGPT Images 2.0 ships in two modes.

Instant Mode generates quickly, prioritizing speed. It includes the full text rendering and multilingual improvements. This is what every user gets by default.

Thinking Mode adds a reasoning pass before generation. The model can search the web, analyze materials you upload (PDFs, screenshots, brand guidelines), reason through layout before drawing, generate up to 8 coherent outputs, and double-check its own outputs before returning them. It can take up to two minutes for a complex prompt, where Instant Mode returns in seconds.

This is what enables the page-long manga sequences, multi-frame storyboards, and full infographics flooding social media since launch. The reasoning pass is also why a single prompt like “design a 4-slide deck explaining quantum tunneling” actually produces 4 coherent slides with consistent design language, instead of 4 unrelated images that happen to share a topic.

Thinking Mode is the part of the launch nobody quite saw coming. It’s effectively a reasoning model with a paintbrush.

Functional QR Codes and Why They Matter

QR codes seem like a parlor trick until you understand what they prove. A QR code is a precise mathematical encoding. One wrong square and the code fails to scan. Every previous image model produced QR-code-shaped images that didn’t actually work.

ChatGPT Images 2.0 generates working QR codes because the thinking-mode reasoning pass actually computes the encoding before drawing. The model can also style the QR with brand colors, embed a logo in the center, and place it inside a fully designed poster. For marketing teams that previously needed a QR generator, a designer, and a layout tool to ship a single piece of collateral, this collapses three steps into one prompt.

It also signals what the architecture can do beyond images. Anything that requires precision rather than vibes, scientific diagrams, technical schematics, accurate maps, becomes plausible.

Resolution and Aspect Ratios

| Spec | GPT Image 1 (GPT-4o) | ChatGPT Images 2.0 |

|---|---|---|

| Max resolution | 1024 x 1024 | 2048 x 2048 (2K) |

| Aspect ratios | 1:1, 2:3, 3:2 | 3:1 to 1:3 (continuous) |

| Images per prompt | 1 | Up to 8 |

| Web access | Non | Yes (thinking) |

| Self-verification | Non | Yes (thinking) |

| Knowledge cutoff | October 2024 | December 2025 |

| Transparent backgrounds | Limited | Yes |

The 3:1 to 1:3 range covers wide banners, slides, posters, Stories, thumbnails, and TikTok verticals, all from the same model with no upscaling or cropping required. That eliminates the awkward “generate square, crop in Photoshop, lose half the composition” workflow that has been standard since DALL-E 2.

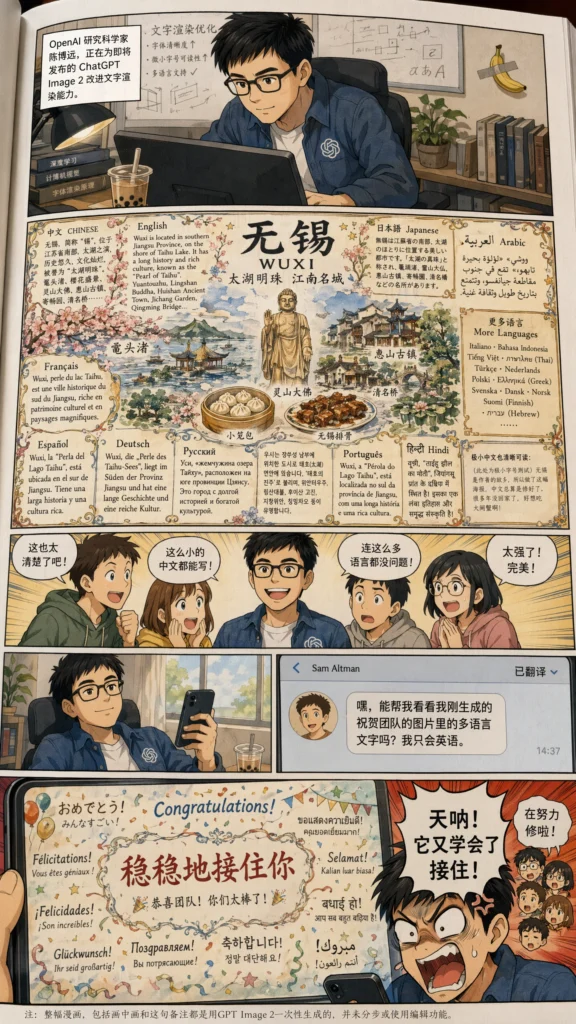

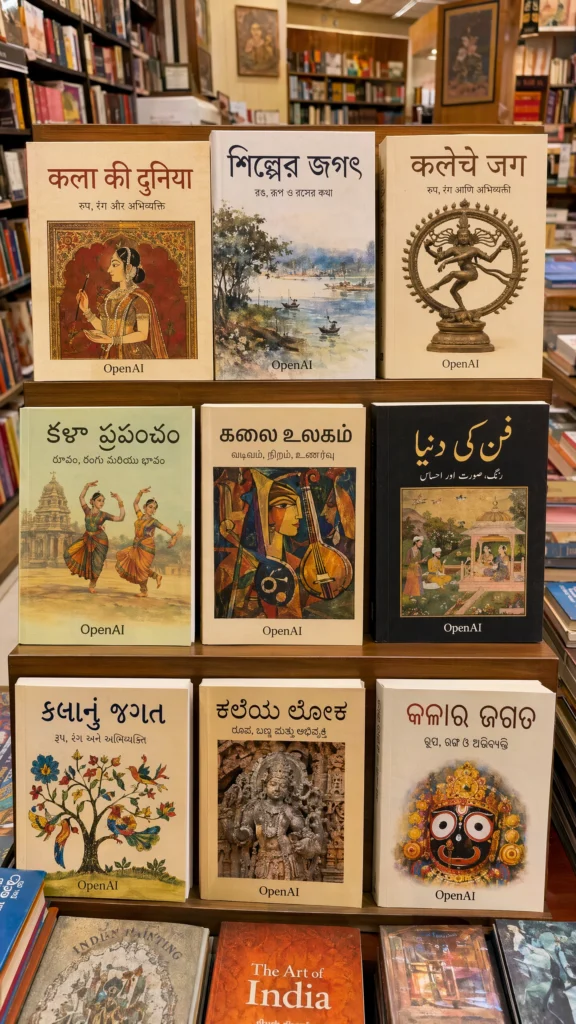

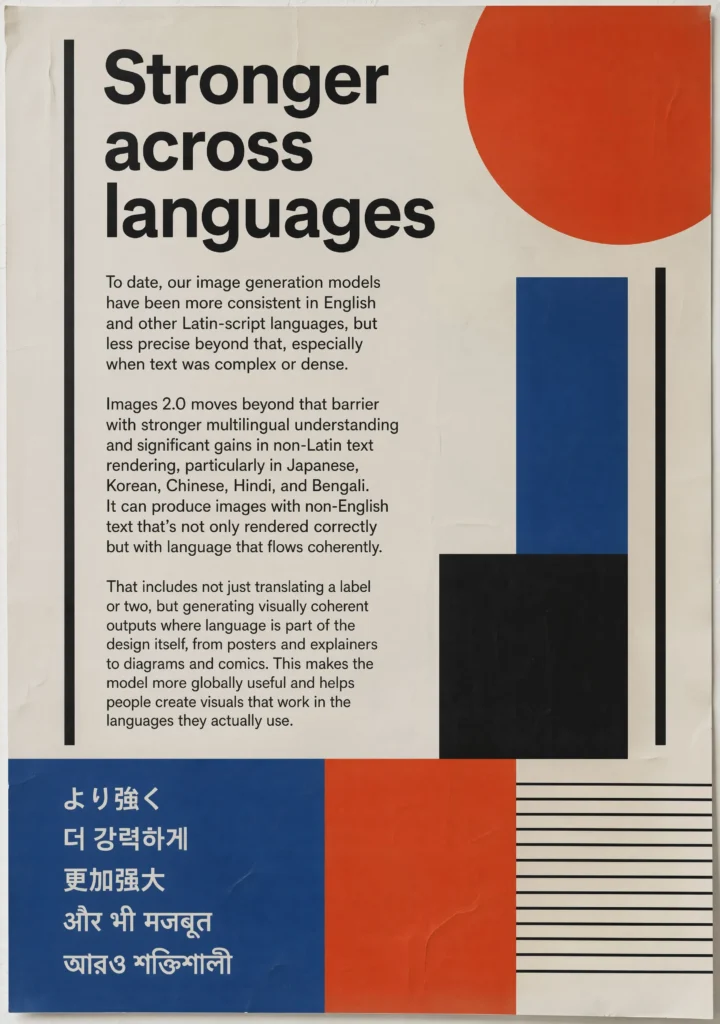

Multilingual Text

OpenAI specifically called out Japanese, Korean, Chinese, Hindi, and Bengali as languages with significant gains. Reviewers tested it and the results read as native text, not the gibberish characters every previous image model produced.

The deeper change is mixed-script handling. The model can render a Japanese poster with Latin product names, an Arabic restaurant menu with Western prices, or a Chinese movie subtitle layered over an English title. Mixed-script layouts have been broken in every commercial image model until now.

For non-English markets, this is the first AI image model usable for production work outside the Latin alphabet. Bengali, Hindi, and Devanagari typography in particular were essentially unusable in DALL-E 3 and Midjourney.

Pricing and API

The gpt-image-2 API is live. Pricing is token-based:

| Token Type | Price |

|---|---|

| Image input tokens | $8 per million |

| Image output tokens | $30 per million |

Final cost per image depends on quality tier and resolution. Per-image estimates at standard 1024 x 1024:

| Quality Tier | Cost per Image |

|---|---|

| Low | ~$0.006 |

| Medium | ~$0.053 |

| High | ~$0.211 |

2K outputs cost more in proportion to the extra tokens. There are two API surfaces: the Image API (model ID gpt-image-2) for one-shot generation and editing, and the Responses API with image generation as a built-in tool. Developers who want their app to track ChatGPT’s production version can use the chatgpt-image-latest alias, which auto-updates.

Some API accounts will need OpenAI Organization Verification before the endpoints become callable.

Availability by Plan

| User Tier | Standard | Thinking Mode |

|---|---|---|

| Free ChatGPT | Yes | Non |

| ChatGPT Plus | Yes | Yes |

| ChatGPT Pro | Yes | Yes (highest limits) |

| ChatGPT Business | Yes | Yes |

| ChatGPT Enterprise | Yes | Coming soon |

| Codex users | Yes | Yes |

| API (gpt-image-2) | Yes | Yes |

Mobile rollout is happening through the latest ChatGPT app update. DALL-E remains available inside ChatGPT but is now positioned as a secondary option for users with existing prompt libraries or specific stylistic needs that DALL-E happens to nail.

How It Compares

Hands-on coverage from VentureBeat, TechCrunch, and TechRadar puts it against the field as follows:

| Capability | ChatGPT Images 2.0 | Midjourney V8 | Nano Banana Pro | Flux 2 |

|---|---|---|---|---|

| Photorealism | Excellent | Excellent | Very good | Excellent |

| Text rendering | Best in class | Good | Very good | Good |

| Multilingual text | Best in class | Limited | Good | Limited |

| Reasoning before generation | Yes | Non | Non | Non |

| Web search | Yes | Non | Non | Non |

| Multi-image consistency | Up to 8 | Limited | Up to 4 | Limited |

| Max resolution | 2K | 2K (upscaled) | ~1.5K | 2K |

| API access | Yes | Non | Yes | Yes |

ChatGPT Images 2.0 leads on text accuracy, multilingual support, and reasoning workflows. Midjourney still has an edge on certain stylized aesthetics, particularly painterly and illustrative work where its older preference-tuned dataset shows. Nano Banana Pro is closer on photorealism than expected and still wins on raw speed. Flux remains the strongest open-weights option for teams that need to self-host.

The Image Arena Numbers

A day after launch, Arena.ai published the leaderboard results and they’re not close. GPT-Image-2 took #1 in every Image Arena category with the largest margins ever recorded on the platform:

| Category | GPT-Image-2 Score | Lead Over #2 |

|---|---|---|

| Text-to-Image (overall) | 1512 | +242 over Nano-banana-2 |

| Single-Image Edit | 1513 | +125 over Nano-banana-pro |

| Multi-Image Edit | 1464 | +90 over Nano-banana-2 |

The +242 point lead in Text-to-Image is the largest gap Arena has ever recorded. For context, model upgrades typically move the leaderboard 30 to 60 points.

GPT-Image-2 also swept all 7 Text-to-Image sub-categories, beating its own predecessor GPT-Image-1.5 by huge margins:

- Product, Branding & Commercial Design: +277 points

- 3D Imaging & Modeling: +274 points

- Cartoon, Anime & Fantasy: +296 points

- Photorealistic & Cinematic Imagery: +247 points

- Art: +197 points

- Portraits: +296 points

- Text Rendering: +316 points

The +316 point jump on Text Rendering is the headline number and confirms what reviewers were saying anecdotally. The model didn’t just improve on text. It moved the entire ceiling.

Models it beat across the board: Nano-banana-2, Nano-Banana-Pro variants, MAI-Image-2, Reve-V1.5, and Grok-Imagine-Image.

What It Still Can’t Do

Not everything is solved. Reviewers flagged a few rough edges:

- Region-selected edits are imprecise. When you mask a specific area and ask for a change, the edit can bleed beyond the mask. For precision work, a written prompt describing what to change is currently more reliable than a spatial selection.

- Thinking Mode is slow. Two minutes for a complex prompt is fine for production work but kills it for casual exploration. Most users will stay in Instant Mode for everyday prompts.

- Highly stylized illustration. Midjourney’s painterly aesthetic still has an edge for editorial illustration and book covers where mood matters more than accuracy.

- Live video and animation. This is a still-image model. Sora handles motion separately.

- Brand likeness consistency across long sessions. Eight images from one prompt are consistent. Twenty across multiple sessions still drift.

Use Cases

Workflows OpenAI highlighted, most already tested by reviewers:

- Marketing creative: banner ads, social graphics, product mockups across formats from a single prompt

- Infographics and slides: full information-dense visuals with accurate small text, charts, and data labels

- Storyboarding and game prototyping: 8-frame consistent character sequences with shared lighting and pose continuity

- Manga and comic pages: multi-panel layouts with consistent characters and readable speech bubbles

- Educational diagrams, maps, scientific illustrations: accurate labels, geographic features, scale

- UI and product mockups: legible interface text and realistic component spacing

- Branded QR codes: codes that actually scan, embedded inside fully designed posters

- Localized creative: native-language posters, ads, and packaging for international markets

- Editorial and book covers: typography-heavy layouts with non-Latin scripts

For agencies, this collapses three or four tools (image generator, layout app, typography editor, QR generator) into one prompt. For solo creators, it’s the difference between needing a designer and not needing one.

Why Codex Labs Launched the Same Day

OpenAI also launched Codex Labs on April 21, a technical training and integration service for organizations adopting Codex. The same-day launch is not a coincidence. Both products signal the same shift: OpenAI is moving from generic chat assistants to specialized agents that own complete workflows. ChatGPT Images 2.0 owns the visual creative loop. Codex owns the engineering loop. Codex Labs is the consulting arm that helps enterprises actually deploy them.

This pattern, ship a strong specialist model, then ship the services that get it into production, is what Anthropic and Google have been doing for the past year. OpenAI catching up on the enterprise side is arguably more strategically important than the image model itself.

How to Try It

For a full walkthrough with prompt formulas and use cases for teachers, students, marketers, and office workers, see our complete guide on how to use ChatGPT Images 2.0.

- In ChatGPT or Codex: open a new chat and prompt. Free users get Instant Mode. Plus and Pro users can switch to a thinking model for reasoning, web search,….

- On mobile: update to the latest ChatGPT app. The image generator now defaults to Images 2.0.

- In the API: use the

gpt-image-2model ID, orchatgpt-image-latestif you want the production-tracked alias. Same endpoint structure asgpt-image-1. - In Fello AI: ChatGPT, Claude 4.6, Gemini 3, and DeepSeek live in one lightweight native app for Mac, iPhone, and iPad. GPT-Image-2 support will be added in upcoming days. Try Fello AI.

Final Take

The leaks understated this one. Pre-release rumors focused on text rendering and aspect ratios, both of which delivered. Nobody predicted OpenAI would bolt a full reasoning model onto the image pipeline and ship web search, self-verification, functional QR codes, and 8-image consistency at the same time.

For the first time, an image model isn’t just generating pixels. It’s planning, researching, drafting, and revising. That’s a different category of tool, and it’s going to reshape the workflow for every team that produces visual content.